Using AI and the human eye to spot social media posts fueling youth violence

The last thing Gakirah Barnes wrote on social media was “In Da End We DIE.” Four days later, she was killed.

Her last post attracted national attention as the public discovered Gakirah’s social media handle and read through the posts of a young woman who was a gang member and who allegedly shot and killed up to 17 people. Today, nearly seven years after her death, Dr. Desmond Patton, a social worker and associate professor at Columbia University, says he has developed a method to interpret the messages Gakirah left behind.

“Gakirah had so much knowledge about violence and trauma,” Patton says. “She could have used that knowledge to help people.”

Now, Patton is using that research to advocate for a more thoughtful response to discussions about policing social media. Where some might see violence and aggression online, Patton sees a cry for help. With a combination of artificial and human intelligence, and with the input of ex-cons, Harlem pastors and former gang members, he is studying social media posts like Gakirah’s to learn how to respond with therapies, not arrests.

At SAFE Lab, his research is being used to examine loss, bereavement and Black digital grief. It’s also part of a new project asking Harlem natives about how they’re experiencing COVID-19 and resistance to anti-racism.

“So much grief is unfolding on social media,” Patton says. “How do we use Black social workers to gain some skills to identify signals of grief and loss?”

A social worker’s approach to social media

Online communities overrun with stream-of-consciousness testimonials caught Patton’s interest in 2012 when he realized that they weren’t being studied for community mental health.

Initially, Patton thought he might track the language of users prone to violence and learn whether social media could predict offline violence. But the life and death of Gakirah changed his mind and his tactics.

“I realized we were asking the wrong questions of the data,” he says.

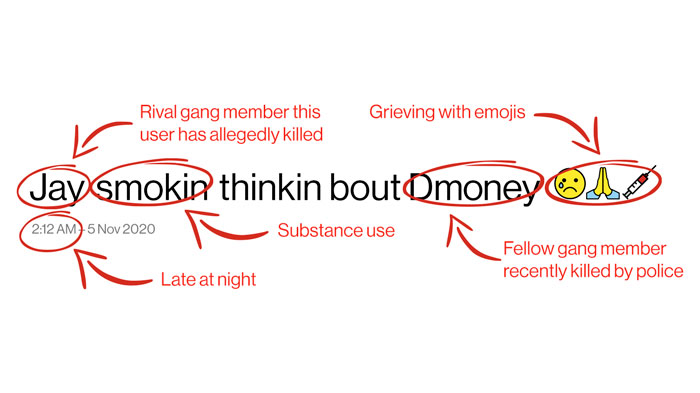

As Patton and his fellow researchers developed algorithms and AI to crawl social media posts and flag language that could lead to violence, he realized an important element was missing. Without human intelligence, meaning people who knew Gakirah, her community and her pain and could provide context, the posts could easily be misinterpreted.

To better understand Gakirah’s tweets, Patton hired young people from her neighborhood to help with the research.

“We would see a post that sounded violent, and we would talk to a person from that neighborhood and they would see a whole new world,” he says. “That human context changed how we labeled the data.”

The emojis of grief

By the age of 17, Gakirah was active on social media, allegedly part of a gang in Chicago, and openly bragging on Twitter about killing 17 people. She was something of an online celebrity in Chicago, appearing in local rap videos waving guns.

“Post after post, she would peel back the onion of her life,” Patton says. “You saw her as a human being. But everyone latched on to the negative, instead of asking, ‘Why was she in trauma in the first place?’”

His research has determined that violence often begets violence, but retribution can be interrupted. Social posts about traumatic losses often follow a pattern. Time and time again, Patton says, it took two days for grief to turn into anger and vengeance.

The circumstances and patterns are not concrete, but generally one gang will be grieving a loss while the other side is gloating, and from there it usually takes about two days for the situation to percolate into an act of aggression.

Gakirah was no exception. Many of her close friends were murdered. Ultimately, the self-proclaimed assassin died within days of writing about the overwhelming grief of seeing too many of her friends in caskets. She was shot nine times.

The reality is that, for many, social media is just a means to have a conversation and be heard. Gakirah wasn’t one-dimensional. Friends said she could be goofy and funny. But you have to look to other sources, like those friends, to learn that about her.

“This is why my work keeps me up at night.”

“We’re sold this idea that the algorithms are not biased,” Patton says. “But the realities are, humans are.”

The answer is to create a digital community of people who can do the computing as well as provide the context.

Patton is working to bring together social workers, youth, computer scientists, formerly incarcerated individuals and motivated community members to collaborate on developing technology tools that support gang violence prevention.

The team works like digital archeologists, sifting through posts and working closely with community members to understand what’s happening. The technology his team is collaborating on will always require a human eye, but the training his group provides will one day change the conversation. Comments that could be grossly misinterpreted will have new context.

Writing a new digital manifesto

Patton started his research looking for a way to stop violent incidents. He set out with the intention to use AI for “computational interventions,” that is, intervening in real time when young people are using language and emojis that raise red flags.

“That’s what we wanted to do. We have not engaged because of the ethics,” he says. “We’re only developing the science and the tools. We’re not deploying, we’re creating conversations.”

Patton is now working to create a healthy online community and to help start beneficial conversations with youth, not police them.

“How do we work with young people to create policies—manifestos,” he says, “that say ‘We’re OK with protocols on social media that keep us safe’? What they don’t want is for adults to misinterpret what we say.”

The goal is to rethink what the development of AI can look like, Patton says.

“And we may learn that AI is not good for identifying violent language, but it’s good for finding resources.”

Could AI and a trained professional have changed the trajectory of Gakirah’s life? Patton thinks it takes more than AI.

“She forced me to look at it differently,” he says. “I don’t think people go to the next step and listen to social media posts. Listen with your eyes. Ask yourself, is there more? Do I understand this story? Is there more context?”
